Give teams what they need without losing control.

Parabola is an AI-powered workflow builder that empowers teams to build reports, automations, and custom logic on top of your existing systems—without creating more work for data and engineering.

Custom reports and one-off asks distract from high-priority work, but no one wants to be the one to say no. Empower non-technical teams to build their own automations without adding to your queue.

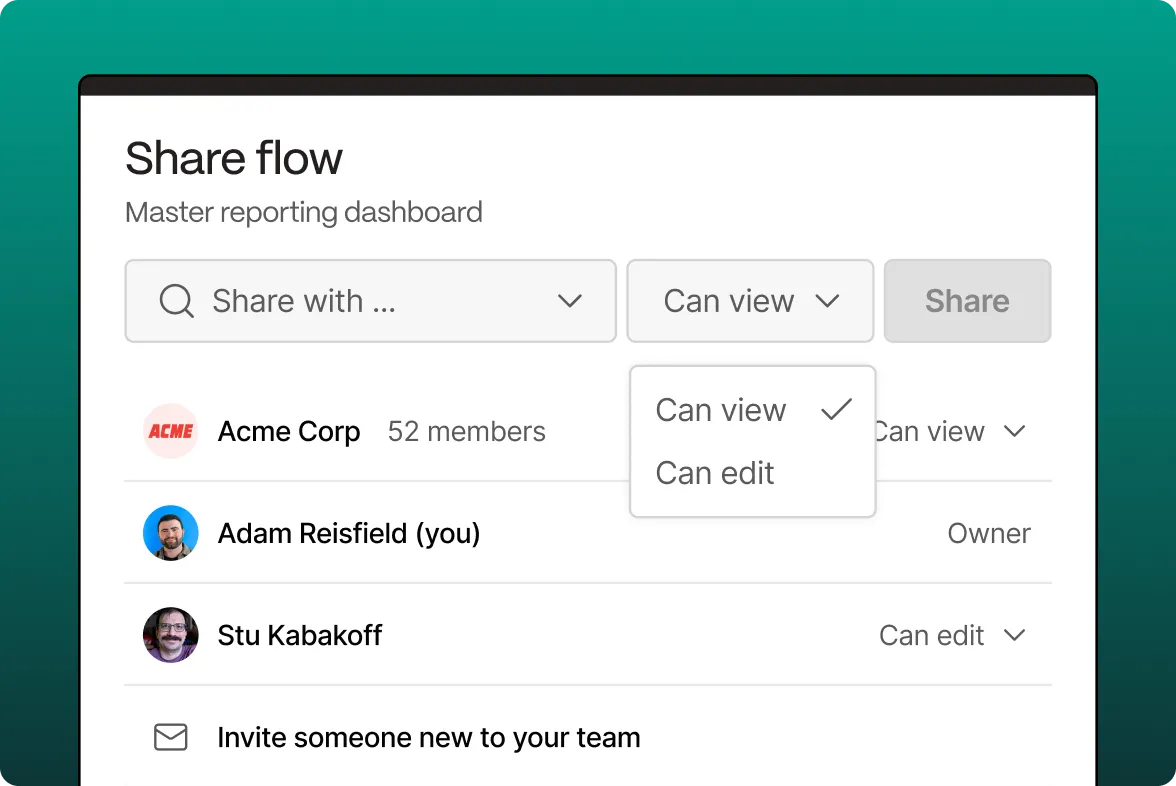

Business teams don’t want to adopt AI alone… but they will if they have to. Be the partner that helps them adopt AI safely and effectively with the guardrails, access, and advice they need.

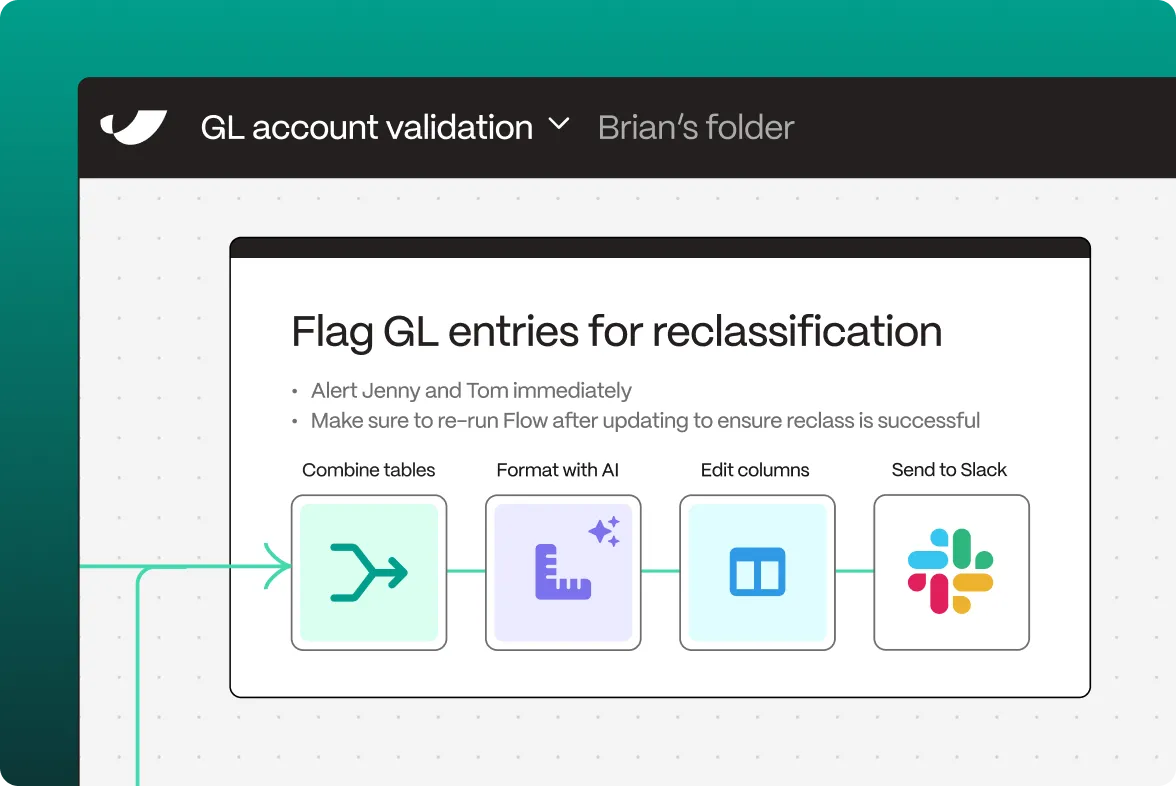

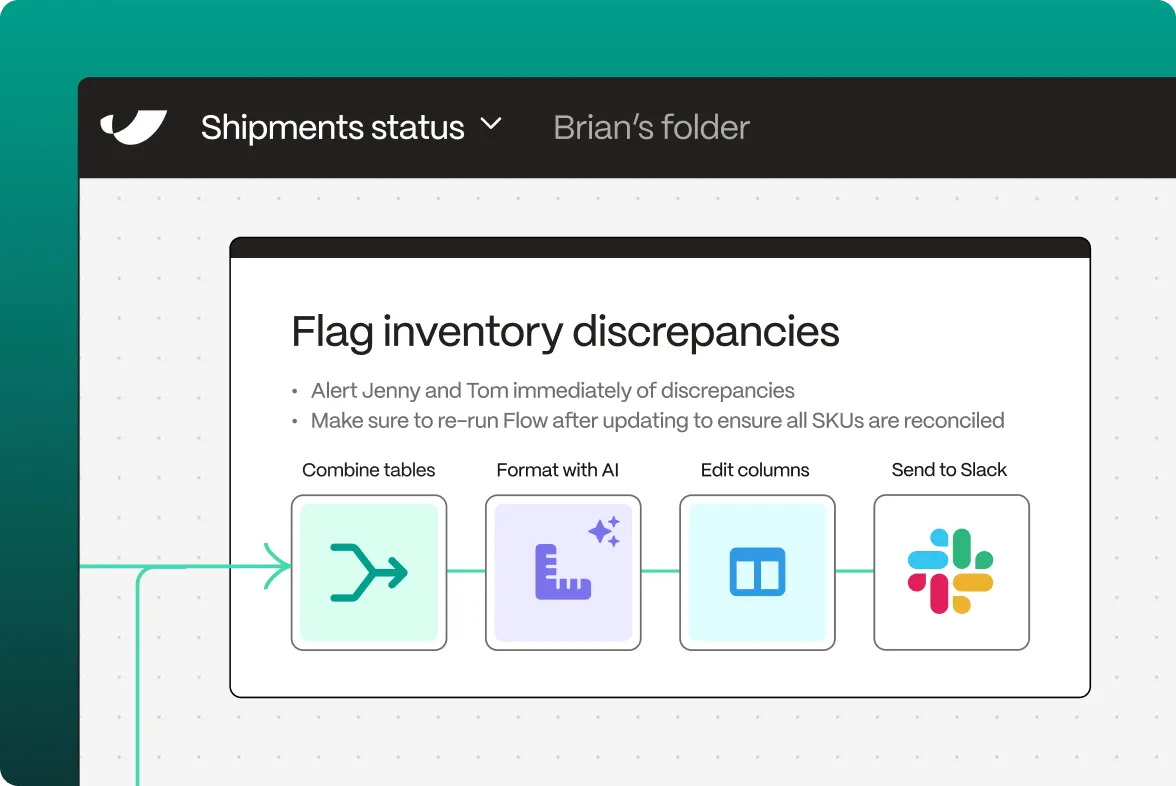

When teams upload CSVs to ChatGPT, you have no visibility into how your data is being changed. With Parabola, every workflow is auditable, permissioned, and version-controlled, just like code.

Empower your business teams to build scalable processes.

See what our customers have to say.

Improve governance and move 10x faster.

Prove ROI before you're invoiced.

Too many teams commit to software they never end up using. That’s why we offer a 30-day proof of concept. Build something real with our team and only become a customer if there’s value.

Turn messy data into automated workflows.

Connect any system, automate every workflow.

Don't just take our word for it.

See how leading brands use Parabola to automate their complex data workflows.

Frequently asked questions

Yes. Parabola is SOC 2 Type II compliant and trusted by brands like Flexport, Coca-Cola, Brooklinen, and On Running.

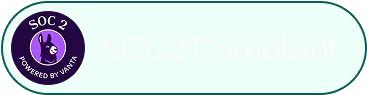

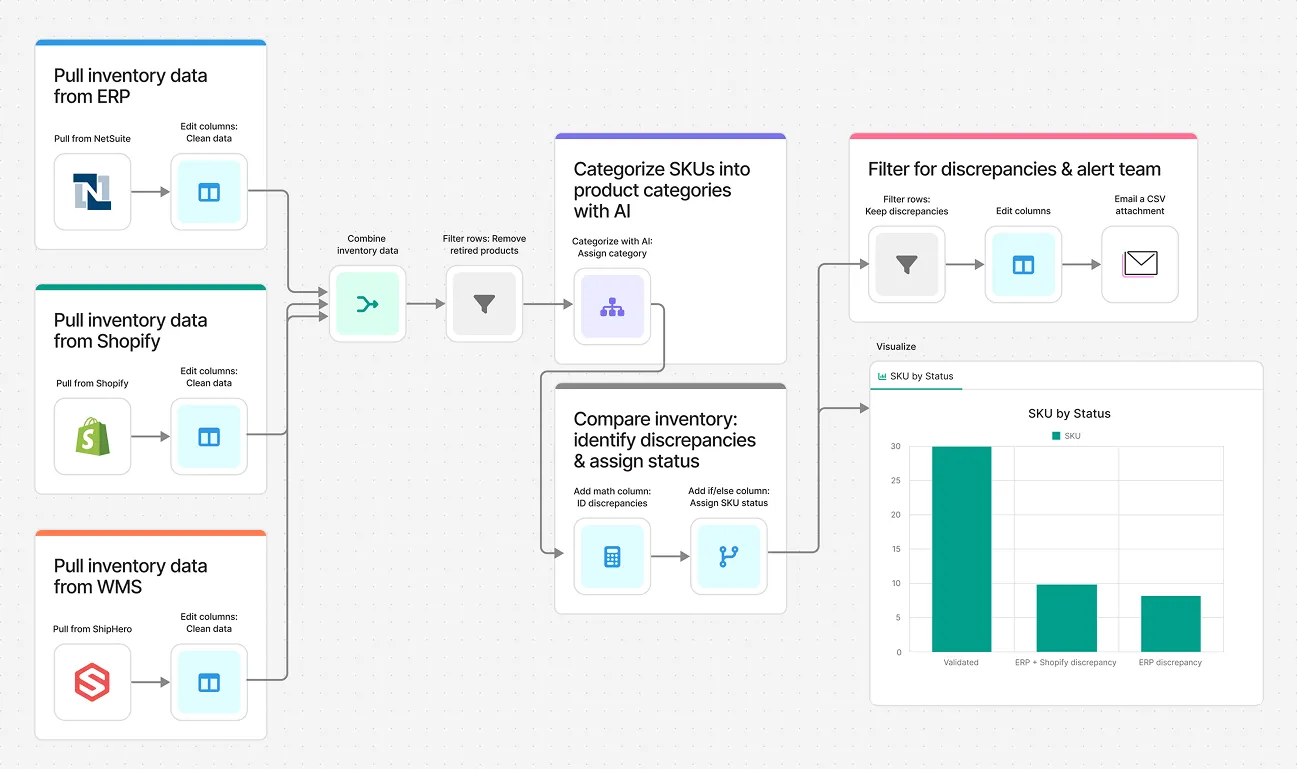

Parabola integrates with virtually any system. In addition to 50+ native integrations like NetSuite and Shopify, Parabola offers an API and the ability to integrate via email—making it easy to connect to systems like ShipHero, Snowflake, Redshift, FTP folders, and more. You can also connect to thousands of tools and work with unstructured data like emails and PDFs.

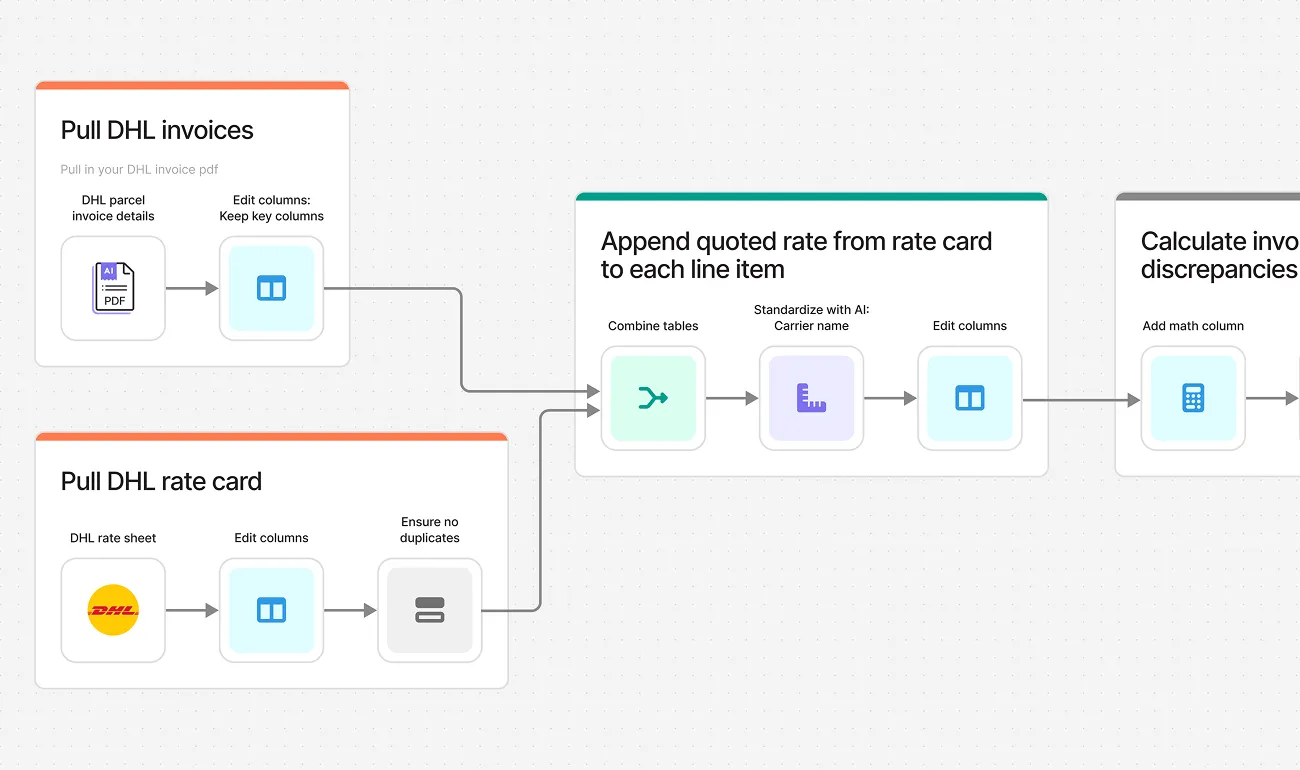

The best Parabola use cases are recurring processes that involve complex logic and messy data coming from multiple data sources.

Parabola is most commonly deployed across operations, supply chain, finance, accounting, procurement, and data teams. Any team regularly working with data can see value from Parabola by working faster with improved visibility.

We offer two approaches to starting with sample data. After signing up for an account, you can ask Parabola, “Help me get started with sample data,” and you’ll be provided with a selection of 10+ sample datasets. Alternatively, use our secure anonymization tool to strip your data of sensitive information before uploading.

Finance and accounting teams across On Running, Cart.com, Brooklinen, Faherty, and Flexport use Parabola to automate the work they thought would always be manual. Explore more on our customer stories page.
